What Is a Foundation Model in Generative AI

Published Date: 19 Mar 2026

Key Takeaways

- Foundation models are general-purpose AI systems trained at scale.

- They reduce time and cost of AI implementation.

- They enable multi-department automation.

- Governance and integration planning are critical.

- Strategic adoption delivers measurable ROI.

In the early 2020s, artificial intelligence was often treated as a "special project": a niche tool tucked away in R&D labs or experimental pilot programs. Fast forward to 2026, and the landscape has shifted fundamentally. AI is no longer a bolt-on feature; it has become the digital foundation upon which the modern US enterprise is built.

At the heart of this transformation lies the Foundation Model.

If you’ve used a sophisticated chatbot, generated a marketing image, or automated a complex coding task recently, you’ve interacted with a foundation model. But for business leaders, these models are more than just "smart tech."

Most explanations out there are either too technical or too vague to be useful for someone making real business decisions. That's a problem, because understanding what a foundation model in generative AI actually is can directly influence how companies invest, which vendors they choose, and how fast they move.

This blog offers a clear, business-first perspective on what these models are, how they work, why they matter, and what you should do about them.

Executive Overview

Traditional machine learning models were typically built for one narrow purpose. Fraud detection. Demand forecasting. Churn prediction. Each required separate data pipelines, model training cycles, and maintenance budgets. Foundation models change that equation. They are trained once at massive scale and then adapted to different use cases through configuration, prompting, or fine-tuning.

Before allocating capital, business leaders should evaluate three realities:

- These models reduce development timelines dramatically.

- They require governance and data oversight.

- They alter long-term AI roadmaps from project-based to platform-based thinking.

Generative AI In Business Context

Generative AI refers to systems that create new content or outputs based on patterns learned from large datasets. That content may include text, code, reports, product descriptions, design drafts, or structured responses to customer queries. For executives, the relevance lies in productivity and responsiveness rather than technical novelty.

Consider a mid-sized U.S. insurance firm handling 40,000 customer emails per month. If even 60 percent of those inquiries follow predictable patterns, automated response drafting can reduce response times from hours to minutes. On top of that, internal teams gain time for complex cases instead of repetitive tasks.
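The arithmetic behind this scenario is easy to sketch. The 40,000 emails and the 60 percent share come from the example above; the minutes saved per drafted email is a purely hypothetical input chosen for illustration, not a measured figure.

```python
# Back-of-the-envelope estimate for the insurance email scenario.
MONTHLY_EMAILS = 40_000        # from the scenario above
PREDICTABLE_SHARE = 0.60       # from the scenario above
MINUTES_SAVED_PER_EMAIL = 4    # ASSUMPTION: illustrative value only


def automatable_emails(total: int, share: float) -> int:
    """Number of emails that follow predictable patterns."""
    return round(total * share)


def hours_saved(emails: int, minutes_each: float) -> float:
    """Staff hours freed up if each drafted email saves `minutes_each`."""
    return emails * minutes_each / 60


drafted = automatable_emails(MONTHLY_EMAILS, PREDICTABLE_SHARE)
print(drafted)                                        # 24000
print(hours_saved(drafted, MINUTES_SAVED_PER_EMAIL))  # 1600.0
```

Swapping in your own volumes and a measured time saving per email turns this into a first-pass sizing of the opportunity.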

Enterprise use cases are already practical and measurable:

- Content automation for marketing and knowledge management

- Intelligent customer support augmentation

- Analytics summarization for executive reporting

- Code generation to accelerate product releases

Adoption across healthcare, finance, manufacturing, and retail is accelerating because these systems plug into existing processes rather than replacing them entirely. When aligned with AI integration strategies, generative tools become force multipliers instead of isolated experiments.

What Is a Foundation Model?

At its core, a foundation model is a large AI system trained on massive volumes of diverse data so it can perform many tasks without being retrained from scratch each time. Instead of building separate models for writing, summarizing, translating, coding, or analyzing, businesses leverage one adaptable intelligence layer.

These models are pre-trained at scale using billions of data points. That pretraining enables contextual understanding. They recognize patterns, language structures, intent, and relationships across domains.

Key characteristics include:

- Multi-purpose adaptability across industries

- Ability to generate and interpret text, images, or code depending on model type

- Rapid customization through fine-tuning or prompt engineering

Examples include large language models used for enterprise knowledge assistants, multimodal systems capable of processing text and images, and code generation engines that accelerate development cycles.

For executives asking what a foundation model in generative AI is, the most important takeaway is this: it is infrastructure-level intelligence. It becomes part of a company’s digital backbone, not just another tool in its tech stack.

Want to unlock efficiency with foundation models today?

Understand how foundation models can streamline operations, reduce costs, and accelerate decision-making across your organization with tailored implementation strategies.

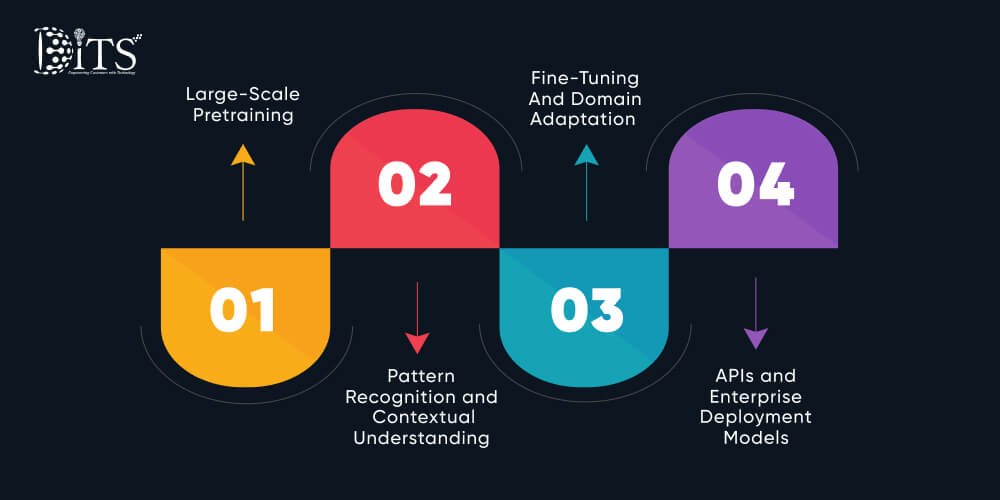

How Foundation Models Work

Understanding mechanics helps demystify cost and deployment expectations.

Large-Scale Pretraining

These systems are trained on extensive datasets, often including books, documentation, structured data, and domain-specific materials. The objective is to learn general patterns rather than memorize specific answers.

Pattern Recognition and Contextual Understanding

The model identifies relationships between words, concepts, and structures. Over time, it develops the ability to generate coherent responses and infer intent from incomplete information.

Fine-Tuning And Domain Adaptation

Enterprises can adapt the model using internal datasets. A healthcare organization may train it further on clinical documentation. A financial institution may refine it for compliance monitoring. This stage increases accuracy in specialized environments.

APIs and Enterprise Deployment Models

Most businesses access these models through APIs, embedding them into applications, CRM systems, analytics dashboards, or internal portals. Others choose private hosting for enhanced data control. When integrated properly within broader AI software development frameworks, they support scalable enterprise solutions without rebuilding architecture from zero.
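As a rough illustration of what API access looks like in practice, the sketch below packages a prompt as an HTTP request using only Python's standard library. The endpoint URL, the payload fields (`prompt`, `max_tokens`), and the bearer-token scheme are all assumptions made for this example; real vendors document their own URLs, field names, and authentication.

```python
import json
import urllib.request

# ASSUMPTION: hypothetical endpoint and payload fields. Substitute the
# URL, authentication scheme, and field names your vendor documents.
API_URL = "https://models.example.com/v1/generate"


def build_request(prompt: str, api_key: str, max_tokens: int = 200) -> urllib.request.Request:
    """Package a prompt as an HTTP POST request (nothing is sent here)."""
    payload = {"prompt": prompt, "max_tokens": max_tokens}
    return urllib.request.Request(
        API_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {api_key}",
        },
        method="POST",
    )


request = build_request("Summarize this claim note for the customer.", api_key="YOUR_KEY")
print(request.get_method(), request.full_url)
# Sending it would be urllib.request.urlopen(request), which needs a real endpoint.
```

The point for decision-makers is that the application only needs to send text and read a response; the model itself stays behind the vendor's or the private host's interface.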

The operational takeaway is clear. These systems are not magic. They are engineered layers of intelligence that can be strategically configured to support measurable business outcomes.

Foundation Model in Generative AI Vs Traditional AI Models

Executives often ask whether this is simply an upgraded version of existing analytics models. It is not. The structural difference changes how technology investments are planned and scaled.

Traditional AI systems were task-specific. If you wanted churn prediction, you built a churn model. If you wanted invoice classification, you built another model. Each project required data preparation, model design, testing cycles, and maintenance teams. Costs multiplied quietly.

A foundation model in generative AI works differently. It is general-purpose. Once trained, it can handle multiple downstream tasks with configuration rather than full redevelopment.

Below is a simplified comparison for decision-makers:

| Criteria | Traditional AI Models | Foundation Models |
| --- | --- | --- |
| Scope | Single-task | Multi-task, cross-functional |
| Development Time | 3 to 12 months per use case | Weeks for adaptation |
| Infrastructure | Separate pipelines | Shared intelligence layer |
| Scalability | Linear expansion | Exponential reuse potential |
| Long-Term Roadmap | Project-based | Platform-based |

The impact on the long-term AI roadmap is significant. Instead of approving isolated AI initiatives annually, leadership can invest in a centralized intelligence layer that supports marketing, operations, legal, finance, and product development simultaneously.

That shift alone changes budgeting, governance, and integration strategy.

Business Benefits of Gen AI Foundation Models

For U.S. enterprises operating under margin pressure, benefits must translate into financial or operational advantage. Otherwise, enthusiasm fades quickly.

Faster Time to Market

Product teams using AI-assisted code generation have reduced development timelines by 20 to 40 percent. Releases accelerate without proportional hiring.

Reduced Development Costs

Rather than building separate machine learning systems, companies reuse a shared model backbone. That consolidation lowers infrastructure duplication and maintenance expenses.

Cross-Functional Scalability

One intelligence layer can support HR document drafting, compliance review, sales proposal generation, and customer chat workflows. This is where business workflow automation becomes practical rather than theoretical.

Enhanced Customer Experience

Real-time response drafting and intelligent personalization reduce wait times and increase satisfaction. Nobody enjoys waiting 48 hours for a simple answer.

Competitive Differentiation

Early adopters are embedding intelligent features directly into products. In many industries, customers now expect AI-powered assistance as standard functionality.

When implemented strategically through structured AI integration, foundation systems evolve from operational support tools into growth accelerators.

Is your AI strategy missing a scalable intelligence layer?

Identify gaps in your current AI strategy and uncover opportunities to leverage foundation models for automation, efficiency, and competitive advantage.

Industry-Specific Applications In US Market

Healthcare

Automated clinical documentation and patient communication assistants reduce administrative workload. Hospitals have reported up to a 30 percent reduction in physician documentation time when systems are carefully implemented.

Finance

Risk assessment summaries, compliance monitoring, and regulatory report drafting become faster and more consistent. Audit preparation cycles shorten significantly.

Retail And Ecommerce

Personalized product recommendations, dynamic content creation, and demand forecasting enhancements improve conversion rates and reduce inventory misalignment.

Manufacturing

Operational logs can be summarized instantly, and predictive insights extracted from maintenance records help prevent costly downtime. Even a single avoided production halt can justify investment.

Legal And Professional Services

Document review, contract analysis, and case summarization accelerate turnaround without compromising oversight.

Across industries, the pattern remains consistent. Foundation intelligence becomes embedded in workflows, not layered awkwardly on top.

Deployment Options for Enterprises

Deployment decisions determine risk exposure and long-term scalability.

Using Public APIs

Software-as-a-service access allows rapid experimentation with lower upfront investment. Ideal for pilots and early adoption.

Private Model Hosting

Enterprises with strict data governance often prefer controlled environments for sensitive workloads.

Fine-Tuning Vs Prompt Engineering

Prompt engineering allows faster configuration without retraining. Fine-tuning increases domain precision but requires curated data and oversight.
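To make the prompt engineering side concrete, here is a minimal sketch of configuring a general-purpose model for a classification task through the prompt alone. The helper name `build_prompt`, the label set, and the sample messages are all hypothetical; the resulting string is what would be sent to whichever model you use.

```python
# Hypothetical helper: adapts a general-purpose model to a task through the
# prompt alone, with no retraining involved.
def build_prompt(instruction: str, examples: list[tuple[str, str]], query: str) -> str:
    """Assemble an instruction, a few worked examples, and the new input."""
    lines = [instruction, ""]
    for source, target in examples:
        lines += [f"Input: {source}", f"Output: {target}", ""]
    lines += [f"Input: {query}", "Output:"]
    return "\n".join(lines)


prompt = build_prompt(
    instruction="Classify each customer message as BILLING, CLAIMS, or OTHER.",
    examples=[
        ("I was charged twice this month.", "BILLING"),
        ("How do I file for water damage?", "CLAIMS"),
    ],
    query="My premium went up without notice.",
)
print(prompt)
```

Changing the instruction and examples retargets the same model to a different task in minutes. Fine-tuning, by contrast, would mean collecting many such input and output pairs and running an additional training step on them.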

On-Premise Vs Cloud Infrastructure

Cloud environments offer scalability and flexibility. On-premise deployment may satisfy regulatory or security mandates.

Security And Compliance Considerations

Industries such as healthcare and finance must align deployments with HIPAA, SOC 2, and other compliance standards. Governance frameworks should be established before scaling usage.

Strategic deployment planning often benefits from structured IT consulting services to align technical decisions with operational objectives.

Build Vs Buy Strategic Decision Framework

Every executive eventually confronts this question. Should we develop internally, customize existing models, or partner externally?

When To Use Existing Foundation Models

For common use cases such as document summarization, internal knowledge assistants, or customer support augmentation, existing models often deliver sufficient accuracy with configuration.

When To Customize Or Fine-Tune

Highly regulated or domain-specific industries may require refinement using proprietary data. This improves precision and contextual alignment.

When Enterprise AI Development Services Become Necessary

If integration complexity, security requirements, or scalability objectives exceed internal capability, external expertise accelerates execution while reducing technical debt.

ROI Evaluation Factors

Leadership should evaluate:

- Reduction in manual workload

- Acceleration in product release cycles

- Revenue uplift from enhanced customer experience

- Infrastructure cost savings
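These four factors can be rolled into a simple ROI figure. The sketch below is one way to do it, assuming every factor has been converted to an annual dollar value; all numbers shown are hypothetical placeholders, not benchmarks.

```python
from dataclasses import dataclass


@dataclass
class RoiInputs:
    # All figures are annual, in dollars, and purely hypothetical.
    manual_workload_savings: float
    faster_release_value: float
    revenue_uplift: float
    infrastructure_savings: float
    total_cost: float  # licenses, integration, governance, and so on


def roi(inputs: RoiInputs) -> float:
    """Simple ROI: (total benefit - total cost) / total cost."""
    benefit = (
        inputs.manual_workload_savings
        + inputs.faster_release_value
        + inputs.revenue_uplift
        + inputs.infrastructure_savings
    )
    return (benefit - inputs.total_cost) / inputs.total_cost


example = RoiInputs(120_000, 60_000, 90_000, 30_000, total_cost=200_000)
print(f"{roi(example):.0%}")  # 50%
```

The hard part is not the formula but agreeing on how each benefit is measured before the pilot starts.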

At DITS, we approach generative systems as part of broader AI software development initiatives. We use AI internally for software development, quality assurance, maintaining code quality, and customization workflows. More importantly, we integrate AI into every software solution we build, ensuring intelligence is embedded rather than added as an afterthought.

Why Choose DITS For Generative AI Development

Enterprise AI initiatives require more than model access. They demand architecture design, system integration, governance alignment, and performance optimization.

DITS delivers structured AI integration aligned with operational objectives. Our teams combine engineering expertise with domain understanding, ensuring generative capabilities connect seamlessly with enterprise platforms.

We embed intelligence across applications, enabling scalable business workflow automation while maintaining code quality and system reliability. AI is not layered on top. It is built into the foundation of every solution.

The outcome is controlled scalability, reduced deployment risk, and measurable performance gains.

Need help deploying foundation models securely at scale?

Get expert guidance on deployment, governance, and integration, ensuring your foundation model initiatives align with compliance, performance, and growth objectives.

Conclusion

Generative systems are redefining enterprise technology strategy, but sustainable value depends on understanding infrastructure, governance, and integration realities.

A foundation model in generative AI represents reusable intelligence at scale. It shifts AI from isolated projects to enterprise platforms capable of supporting multiple departments simultaneously.

For U.S. businesses navigating cost pressure and competitive acceleration, strategic adoption can deliver tangible ROI within defined timelines. The opportunity is substantial. The execution discipline determines results.

FAQs

How much does it cost to implement foundation models?

Costs vary based on usage volume, customization level, and deployment method. Pilot programs may start in the low five-figure range, while enterprise-wide integration can scale significantly depending on infrastructure and compliance requirements.

Do we need large internal datasets?

Pretrained systems already possess broad knowledge. Internal datasets improve domain accuracy but are not mandatory for basic implementation.

Are foundation models secure for enterprise use?

They can be secure when deployed with appropriate governance, access controls, and compliance alignment. Private hosting options increase control for regulated industries.

How long does deployment take?

Initial pilots can be operational within 6 to 12 weeks. Broader enterprise integration may require several months depending on complexity.

How does DITS support enterprise generative AI development initiatives?

DITS delivers end-to-end generative AI development services, beginning with use case identification and architecture planning, followed by secure model integration, customization, and performance optimization. Our teams embed intelligence directly into enterprise platforms rather than treating AI as an add-on feature.

Can DITS customize foundation models for industry-specific requirements?

Yes. DITS evaluates regulatory constraints, operational workflows, and data maturity before recommending customization strategies. Depending on business objectives, we implement prompt engineering, fine-tuning, or private hosting configurations. Our generative AI development services ensure that solutions align with compliance standards and integrate seamlessly with existing systems.

Dinesh Thakur

21+ years of IT software development experience in different domains like Business Automation, Healthcare, Retail, Workflow automation, Transportation and logistics, Compliance, Risk Mitigation, POS, etc. Hands-on experience in dealing with overseas clients and providing them with an apt solution to their business needs.
